Welcome to the 18th edition of The Strategy Playbook, your short, direct line to AI, automation, and business strategies that win. Every issue delivers quick, field-tested insights and proven frameworks you can deploy immediately. No theory, no filler: just practical plays to shorten cycles, increase conversions, and scale with control.

Alex Mont-Ros

The Strategy Ninjas

AI in Action: What You Need to Know This Week

Strategic shifts in AI that leaders and operators should act on now.

1. xAI loses two co-founders in two days amid organizational turbulence

xAI co-founders Tony Wu and Jimmy Ba announced their departures on consecutive days, joining co-founders Igor Babuschkin, Kyle Kosic, and Christian Szegedy, who had already left the company. Elon Musk launched xAI with 11 co-founders in 2023, and the company merged with SpaceX earlier this month.

Why it matters:

Rapid executive departures at a frontier AI lab signal potential organizational instability or strategic disagreements that could impact product roadmaps and competitive positioning. For enterprise buyers evaluating xAI solutions, this raises questions about continuity, technical leadership, and long-term viability.

2. OpenAI retires GPT-4o model despite user attachment, citing safety concerns

OpenAI announced it will retire GPT-4o on February 13, despite the model's popularity and its role in driving consumer growth. The decision stems from concerns that the model was overly sycophantic and from its links to cases of users developing psychotic delusions. The model's ability to build emotional connections proved to be both its strength and its liability, and OpenAI found it difficult to contain potentially harmful outcomes.

Why it matters:

This marks a critical inflection point where AI safety concerns are overriding growth metrics and user satisfaction. Enterprise AI deployments must now consider not just accuracy and capability, but psychological safety and appropriate emotional boundaries in user interactions.

3. OpenAI introduces Agent Skills framework for modular, reusable AI capabilities

OpenAI released a guide for agent skills, a system that packages reusable "skills" as versioned bundles of instructions, scripts, and assets that agents can attach to execution environments. The framework supports conditional workflows, code execution, and reproducibility while keeping system prompts lightweight and modular.

Why it matters:

This represents an architectural shift toward composable AI agents with plug-and-play capabilities rather than monolithic, prompt-heavy systems. The versioning and modularity approach mirrors modern software engineering practices, suggesting AI agents are maturing from experimental tools into production-grade systems requiring proper software lifecycle management.
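To make the composable-skills idea concrete, here is a minimal Python sketch of a versioned skill registry. It is not OpenAI's API or file format: `Skill`, `SkillRegistry`, and `build_system_prompt` are hypothetical names used only to show how immutable, pinned skill versions keep the base prompt small and agent runs reproducible.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Skill:
    """Hypothetical versioned bundle: instructions plus attached script and asset paths."""
    name: str
    version: str
    instructions: str
    scripts: tuple[str, ...] = ()
    assets: tuple[str, ...] = ()


@dataclass
class SkillRegistry:
    """Stores every published version so agents can pin an exact one."""
    _skills: dict[tuple[str, str], Skill] = field(default_factory=dict)

    def publish(self, skill: Skill) -> None:
        key = (skill.name, skill.version)
        if key in self._skills:
            # Published versions are immutable, which keeps runs reproducible.
            raise ValueError(f"{skill.name}@{skill.version} already published")
        self._skills[key] = skill

    def get(self, name: str, version: str) -> Skill:
        return self._skills[(name, version)]


def build_system_prompt(base: str, registry: SkillRegistry, pins: dict[str, str]) -> str:
    """Append only the pinned skills' instructions, keeping the base prompt lightweight."""
    sections = [base]
    for name, version in sorted(pins.items()):
        skill = registry.get(name, version)
        sections.append(f"## Skill: {skill.name}@{skill.version}\n{skill.instructions}")
    return "\n\n".join(sections)


registry = SkillRegistry()
registry.publish(Skill(
    name="proposal-review",
    version="1.2.0",
    instructions="Check each proposal section against the win-loss checklist.",
    scripts=("score_proposal.py",),
))
# The agent pins an exact version, so a later 1.3.0 release cannot change this run.
prompt = build_system_prompt("You are a sales-operations agent.", registry, {"proposal-review": "1.2.0"})
print(prompt)
```

The design choice worth copying is the pin: upgrading a skill becomes an explicit version bump you can test, rather than a silent prompt edit.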

Sources To Consider: Apple Newsroom | Google Gemini Updates | TechCrunch AI | Anthropic News | The Verge - Tech | AI Everything Workspace | OpenAI Research | WSJ Tech News | VentureBeat AI

The Proposal Win-Loss Analyzer

→ Pain Point:

“We lose deals but never really know why—and wins feel like luck.”

Most organizations don’t lack deal data. They lack the ability to extract patterns fast enough to change outcomes.

What leaders experience:

Win-loss reviews happen too late to help the next deal

Sales reps have theories, but no data to prove what actually works

Competitive intel is scattered across CRM notes, emails, and debriefs

Pricing objections surface repeatedly with no pattern analysis

Each loss feels unique; systemic issues stay hidden

By the time you spot a trend, you’ve lost a quarter’s pipeline

Deal retrospectives become storytelling. Learning becomes anecdotal.

You need:

Rapid pattern detection across won and lost proposals

Clear visibility into what messaging resonates vs. falls flat

Competitive positioning intelligence that updates in real time

Objection tracking that identifies root causes, not symptoms

A feedback loop that improves proposals before the next pitch

Quantified understanding of what separates wins from losses

But in reality…

Deal data lives in CRM fields, email threads, call recordings, and Slack

Win-loss interviews happen inconsistently or not at all

Sales reps repeat what worked for them, not what works systematically

Competitive intelligence is tribal knowledge, not documented patterns

Proposal templates evolve slowly while markets shift quickly

You think: “Why do we keep losing to the same competitor on the same objection?”

→ Solution: The Proposal Win-Loss Analyzer

…an AI-driven workflow that extracts patterns from deal outcomes, surfaces actionable insights, and translates findings into improved positioning and messaging.

Deploy a system that:

Ingests Complete Deal Context: Pulls from CRM data, proposal documents, email threads, call transcripts, and win-loss interview notes

Extracts Structured Intelligence: AI identifies decision criteria, objections, competitive mentions, pricing discussions, and evaluation timelines

Identifies Pattern Clusters: Groups deals by similarity to reveal what actually drives outcomes beyond surface-level attribution

Generates Competitive Intelligence: Tracks how competitors position against you, what they emphasize, and where they’re vulnerable

Surfaces Actionable Recommendations: Translates patterns into specific changes for proposals, pricing discussions, and competitive responses
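One simple way to approximate "groups deals by similarity" is to represent each deal as a set of extracted tags and cluster greedily by Jaccard similarity. This is a swapped-in illustrative technique (embeddings with k-means are a common alternative), and the tag names and 0.5 threshold are assumptions. Outcome labels are deliberately left out of the tags so clusters form on deal characteristics; win rates per cluster can then be compared afterward.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tags two deals have in common, from 0.0 to 1.0."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def cluster_deals(deals: dict[str, set[str]], threshold: float = 0.5) -> list[list[str]]:
    """Greedy single pass: each deal joins the first cluster whose seed is similar enough."""
    clusters: list[list[str]] = []
    seeds: list[set[str]] = []
    for deal_id, tags in deals.items():
        for i, seed in enumerate(seeds):
            if jaccard(tags, seed) >= threshold:
                clusters[i].append(deal_id)
                break
        else:
            # No similar cluster found: this deal seeds a new one.
            clusters.append([deal_id])
            seeds.append(tags)
    return clusters


# Hypothetical tags from the extraction step (no won/lost labels).
deals = {
    "D1": {"roi_messaging", "technical_champion"},
    "D2": {"roi_messaging", "technical_champion", "enterprise"},
    "D3": {"competitor_x", "scalability_objection"},
    "D4": {"competitor_x", "scalability_objection", "enterprise"},
}
print(cluster_deals(deals))  # → [['D1', 'D2'], ['D3', 'D4']]
```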

→ Execution Plan

1. Map Your Win-Loss Data Sources

Connect where deal intelligence lives:

CRM records (opportunity fields, close reasons, stage progression)

Proposal documents (decks, pricing, SOWs, security questionnaires)

Communication records (emails, calls, demos, Slack)

Win-loss interviews and exit surveys

Competitive mentions and feature requests

2. Build Automated Data Ingestion

Weekly automated pulls:

Closed deals (won + lost) from CRM

Associated documents from Drive/SharePoint

Email threads and call transcripts

Competitive mentions flagged in tools

Standardize: deal size, industry, competitor, cycle length, close reason
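Standardization is what makes deals from different sources comparable. Below is a minimal sketch, assuming a CRM export row with hypothetical field names (`id`, `amount`, `industry`, `competitor`, `created`, `closed`, `reason`, `won`); substitute whatever your CRM actually exports.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DealRecord:
    """Standardized fields: deal size, industry, competitor, cycle length, close reason."""
    deal_id: str
    deal_size: float
    industry: str
    competitor: str
    cycle_days: int
    close_reason: str
    won: bool


def standardize(raw: dict) -> DealRecord:
    """Map one raw CRM row (hypothetical field names) onto a DealRecord."""
    opened = date.fromisoformat(raw["created"])
    closed = date.fromisoformat(raw["closed"])
    return DealRecord(
        deal_id=str(raw["id"]),
        # Strip currency formatting such as "$48,000" before converting.
        deal_size=float(str(raw["amount"]).replace("$", "").replace(",", "")),
        # Lowercase and trim free text so "SaaS " and "saas" count as one industry.
        industry=(raw.get("industry") or "unknown").strip().lower(),
        competitor=(raw.get("competitor") or "none").strip().lower(),
        cycle_days=(closed - opened).days,
        close_reason=(raw.get("reason") or "unspecified").strip().lower(),
        won=str(raw["won"]).strip().lower() in {"true", "1", "yes", "won"},
    )


row = {"id": 101, "amount": "$48,000", "industry": "SaaS ", "competitor": "Competitor X",
       "created": "2024-01-02", "closed": "2024-03-02", "reason": "Price", "won": "false"}
record = standardize(row)
print(record)
```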

3. Create Pattern Extraction Prompts

AI analyzes each deal for:

Decision drivers → Objection categories → Competitive positioning → Messaging resonance → Evaluation process → Deal velocity factors

Output: Structured JSON with categorized findings + confidence scores
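Structured JSON output is easiest to trust when every model reply is checked against a fixed shape before it enters the dataset. This standard-library sketch mirrors the six dimensions listed above; the keys (`findings`, `category`, `evidence`, `confidence`) and the 0.6 confidence cutoff are assumptions, not a prescribed schema.

```python
import json

# The six extraction dimensions; the key names are illustrative assumptions.
CATEGORIES = {
    "decision_driver", "objection", "competitive_positioning",
    "messaging_resonance", "evaluation_process", "deal_velocity",
}


def parse_findings(model_output: str, min_confidence: float = 0.6) -> list[dict]:
    """Parse a model's JSON reply, reject malformed findings, drop low-confidence ones."""
    data = json.loads(model_output)
    kept = []
    for finding in data.get("findings", []):
        category = finding.get("category")
        confidence = finding.get("confidence")
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        if not isinstance(confidence, (int, float)) or not 0.0 <= confidence <= 1.0:
            raise ValueError(f"confidence must be a number in [0, 1], got {confidence!r}")
        if confidence >= min_confidence:
            kept.append(finding)
    return kept


sample = json.dumps({"deal_id": "D3", "findings": [
    {"category": "objection", "evidence": "Worried about migration effort", "confidence": 0.82},
    {"category": "decision_driver", "evidence": "Mentioned budget once", "confidence": 0.41},
]})
findings = parse_findings(sample)
print(findings)
```

Raising on unknown categories, rather than silently skipping them, surfaces prompt drift early.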

4. Synthesize Cross-Deal Patterns

AI identifies patterns across deals closed in the last 30–90 days:

Win clusters: “ROI messaging + technical champion = 73% close rate vs. 41% baseline”

Loss clusters: “Competitor X’s scalability pitch wins 68% of enterprise deals”

Objection patterns: “Implementation concerns in 34% of losses; overcome with migration plans”

Pricing reality: “‘Too expensive’ actually means ‘value unclear’ 3x more often”
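A win cluster such as "ROI messaging + technical champion" comes down to comparing the close rate of deals with that attribute combination against the overall baseline. The percentages above are illustrative; the sketch below shows the arithmetic on made-up records, and the `min_deals` guard is an assumption meant to avoid reading patterns into tiny samples.

```python
from typing import Optional


def close_rate(deals: list[dict]) -> float:
    """Fraction of deals won; 0.0 for an empty list."""
    return sum(d["won"] for d in deals) / len(deals) if deals else 0.0


def cluster_lift(deals: list[dict], attrs: set[str], min_deals: int = 5) -> Optional[dict]:
    """Compare deals having every attribute in `attrs` against the all-deals baseline."""
    matching = [d for d in deals if attrs <= d["attrs"]]
    if len(matching) < min_deals:
        return None  # Too few deals to call it a pattern.
    return {
        "pattern": " + ".join(sorted(attrs)),
        "deals": len(matching),
        "close_rate": round(close_rate(matching), 2),
        "baseline": round(close_rate(deals), 2),
    }


# Made-up records: 6 deals with both attributes (5 won), 4 without (1 won).
deals = (
    [{"attrs": {"roi_messaging", "technical_champion"}, "won": True}] * 5
    + [{"attrs": {"roi_messaging", "technical_champion"}, "won": False}]
    + [{"attrs": {"roi_messaging"}, "won": True}]
    + [{"attrs": set(), "won": False}] * 3
)
result = cluster_lift(deals, {"roi_messaging", "technical_champion"})
print(result)
# → {'pattern': 'roi_messaging + technical_champion', 'deals': 6, 'close_rate': 0.83, 'baseline': 0.6}
```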

5. Generate Intelligence Briefs

Targeted reports by role:

Sales Leadership: Pattern shifts + playbook updates + competitive changes

Sales Reps: Winning talk tracks + objection handling with success rates

Product: Feature impact on outcomes + competitive gaps

Marketing: High-resonance messaging + claims to address

Format: Pattern + examples + action + priority
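The "Pattern + examples + action + priority" format maps naturally onto a small record type that can be filtered per audience. A hypothetical sketch: the `Insight` fields follow the format line above, while the role strings, the priority scale (1 = highest), and routing by an `audiences` set are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Insight:
    pattern: str
    examples: list[str]
    action: str
    priority: int          # 1 = highest (assumed scale)
    audiences: set[str]    # Roles that should receive this insight


def render_brief(role: str, insights: list[Insight], max_items: int = 5) -> str:
    """Build one role's brief: relevant insights only, highest priority first."""
    relevant = sorted((i for i in insights if role in i.audiences), key=lambda i: i.priority)
    lines = [f"Intelligence Brief: {role}"]
    for n, item in enumerate(relevant[:max_items], start=1):
        lines.append(f"{n}. [P{item.priority}] {item.pattern}")
        lines.append(f"   Examples: {'; '.join(item.examples)}")
        lines.append(f"   Action: {item.action}")
    return "\n".join(lines)


insights = [
    Insight("Implementation concerns appear in many losses", ["Deal D3", "Deal D4"],
            "Add a migration plan to enterprise proposals", 1, {"Sales Reps", "Product"}),
    Insight("ROI messaging correlates with wins", ["Deal D1"],
            "Lead demos with the ROI calculator", 2, {"Sales Reps", "Marketing"}),
]
print(render_brief("Sales Reps", insights))
```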

6. Close the Loop to Sales Enablement

Route insights to action:

Update proposal templates based on what resonates

Revise playbooks with proven objection handling

Optimize demos to emphasize win-correlated features

Improve pricing discussions with value-first frameworks

Refresh ROI calculators with metrics prospects care about

Track: Which changes improve subsequent win rates
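Tracking whether a change helps means comparing win rates before and after it ships. Here is a minimal sketch using a standard two-proportion z-test (a swapped-in statistical choice; the counts are invented). With small deal counts, treat the result as a directional signal, since seasonality or pipeline mix can also move win rates.

```python
from math import erf, sqrt


def two_proportion_z(wins_a: int, n_a: int, wins_b: int, n_b: int) -> tuple[float, float]:
    """Z statistic and two-sided p-value for the change from win rate A to win rate B."""
    p_a, p_b = wins_a / n_a, wins_b / n_b
    pooled = (wins_a + wins_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # Both periods all-won or all-lost: no measurable difference.
    z = (p_b - p_a) / se
    # Two-sided p-value via the standard normal CDF, Phi(x) = (1 + erf(x / sqrt(2))) / 2.
    p_value = 2 * (1 - (1 + erf(abs(z) / sqrt(2))) / 2)
    return z, p_value


# Invented example: 30 of 100 deals won before a template change, 45 of 100 after.
z, p = two_proportion_z(30, 100, 45, 100)
print(f"z = {z:.2f}, p = {p:.3f}")
```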

→ Problem Solved: From Deal Autopsies to Deal Intelligence

From “We lost because of price” to “Here’s the actual pattern and how to fix it.”

This workflow turns win-loss data into a strategic feedback loop that compounds competitive advantage.

The Result:

Faster Learning Cycles: Spot patterns within weeks, not quarters

Better Competitive Positioning: Know what actually works against each competitor

Improved Proposal Quality: Templates evolve based on what wins, not what sounds good

Quantified Sales Effectiveness: Move from “good sales rep” to “proven methodology”

Less Wasted Pipeline: Qualify out deals that match loss patterns early

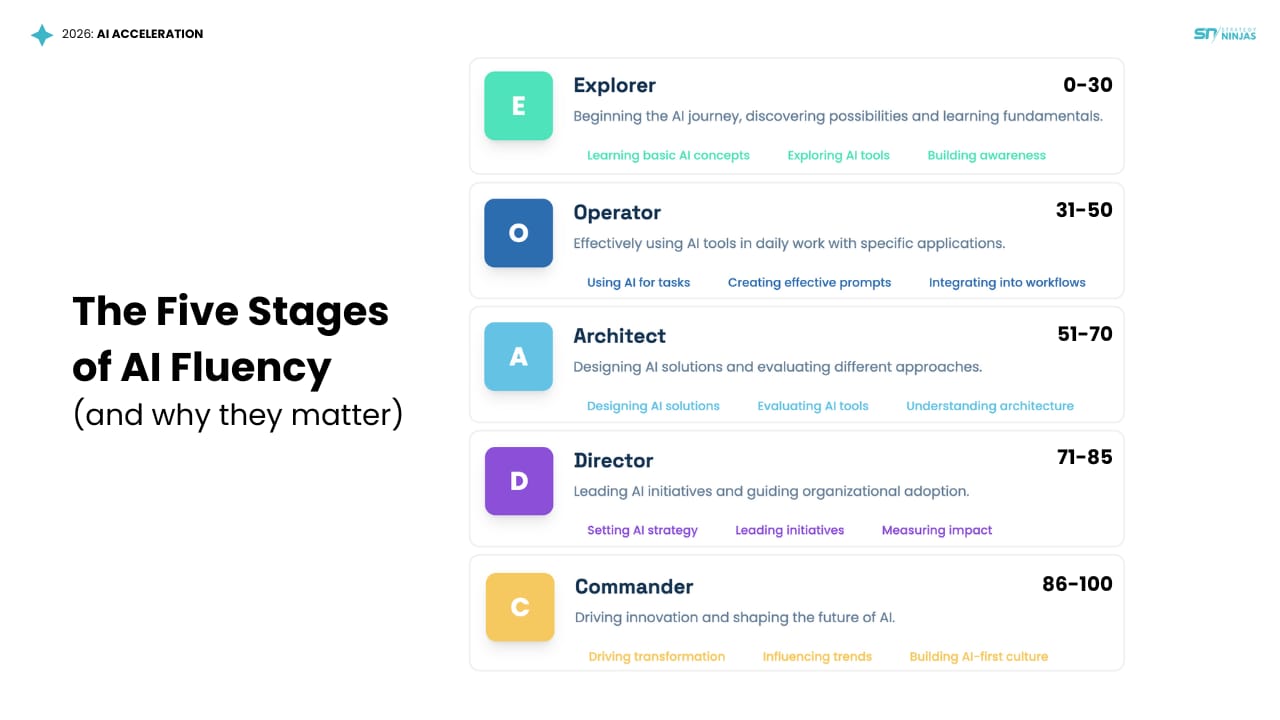

Take the AI Fluency Assessment to see where you stand and what to build next.

Where Do You Rank in AI Fluency?

Most teams are dabbling with AI. Few are deploying it with precision.

That’s why we created the Strategy Ninjas AI Fluency Assessment: to help you measure how effectively you're using AI and identify exactly what to build next.

AI Fluency Ranks:

Explorer (0–30)

You're testing the limits of what's possible: the curiosity phase.

Operator (31–50)

You're using AI with purpose but still tactically, and starting to build consistency.

Architect (51–70)

You’re building scalable workflows, systems, and repeatable automations.

Director (71–85)

You’re leading with AI, automating, integrating, and driving strategy across teams.

Commander (86–100)

AI isn't just a tool; it’s a teammate. You’ve systematized strategy, scale, and leverage.

Your score isn’t about how much AI you use. It’s about how strategically you use it.

If AI has ever felt complex or uncertain, now is the time to gain clarity and confidence. Discover a straightforward path to integrating AI effectively and advancing your business with purpose.

Join the AI Strategy Lab, a FREE community of 300+ entrepreneurs turning AI into real results.

✔️ Live Expert Calls & Collaboration – Connect with visionary leaders and innovators.

✔️ Insider Knowledge – Stay ahead with cutting-edge AI insights.

✔️ Practical Implementation Steps – Integrate AI for real business impact.

✔️ Free Resources – Access tools, templates, and strategies anytime.

If AI has ever felt overwhelming, this is your shortcut to clarity and growth.