Welcome to the 24th edition of The Strategy Playbook, your short, direct line to AI, automation, and business strategies that win.

Every issue delivers quick, field-tested insights and frameworks you can deploy immediately. No theory, no filler: just proven plays to shorten cycles, increase conversions, and scale with control.

Alex Mont-Ros

The Strategy Ninjas

AI in Action: What You Need to Know This Week

Strategic shifts in AI that leaders and operators should act on now.

Google’s TurboQuant Compresses Models by 6x With Zero Accuracy Loss

Google Research released a compression algorithm that cuts AI memory requirements sixfold and delivers up to eight times faster performance, all without sacrificing accuracy.

For operators, this means the AI you run in a few months could cost a fraction of what it does now.

OpenAI to Merge ChatGPT, Codex, and Browser Into a Single Superapp

OpenAI announced plans to collapse its ChatGPT app, Codex coding platform, and Atlas web browser into one unified desktop application, mirroring Anthropic’s bundled approach with Claude.

AI is consolidating into unified interfaces, and the winners will be the operators who know what to delegate to these platforms and what to keep in human hands.

The Real Unit of AI Transformation Is the Team

A Charter analysis argues that most AI strategies fail because they target either the whole organization or the individual, missing the team level in between.

When AI gets built into shared rituals, workflows, and norms at the team level, adoption compounds. Top-down mandates stall. For those waiting on company-wide AI strategies to take hold, the signal is clear: start with one team, one workflow, one win.

Sources To Consider: Apple Newsroom | Google Gemini Updates | TechCrunch AI | Anthropic News | The Verge Tech | AI Everything Workspace | OpenAI Research | WSJ Tech News

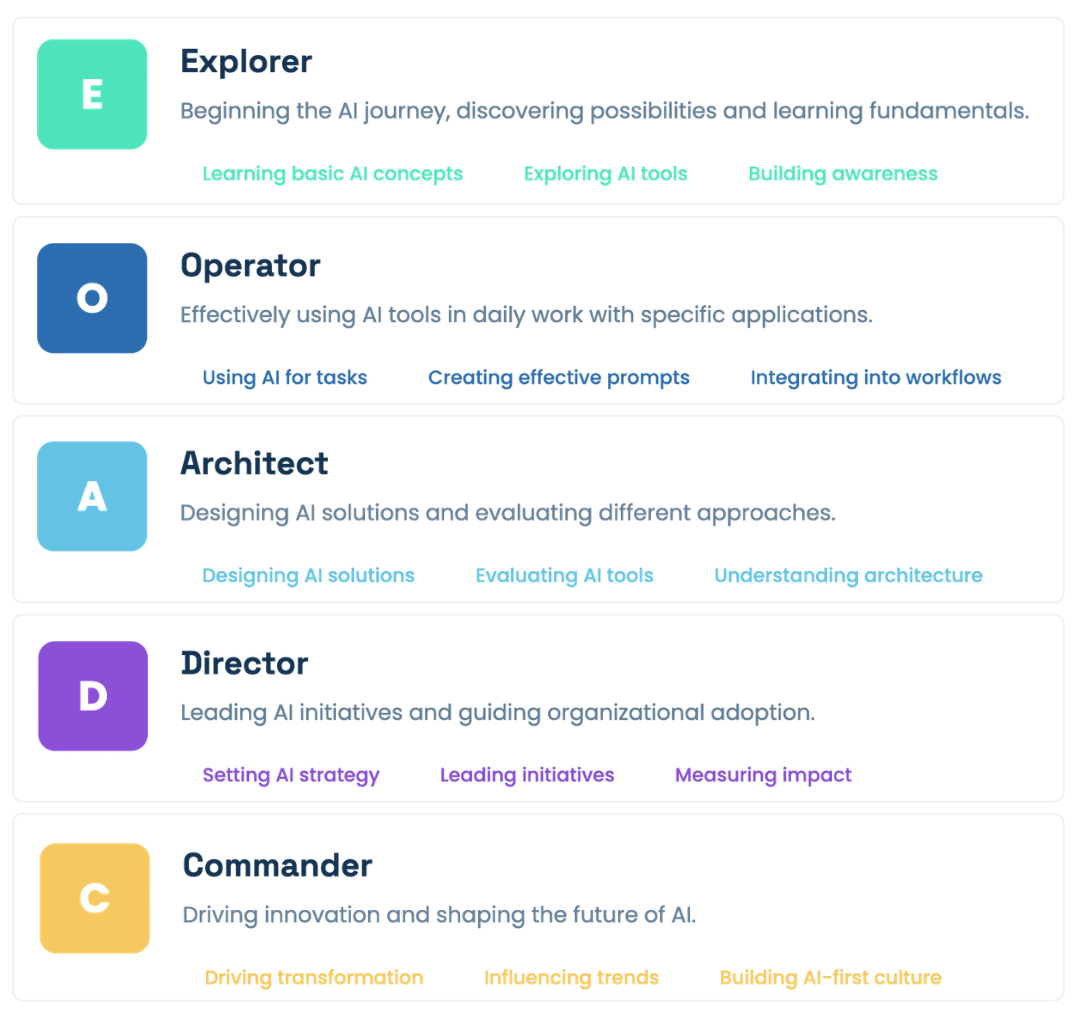

Where Do You Rank in AI Fluency?

Most professionals use AI as a digital assistant for basic tasks. The top 1% are using it as an operational engine.

Click the button above to receive your FREE AI Fluency Score™.

The Scalable Judgment Stack

Pain Point: “We’re on all the platforms, but we can’t tell if AI is actually making us better.”

AI can generate proposals in seconds. It can summarize a quarter’s worth of data in a paragraph. It can draft outreach, sort leads, and write listing descriptions faster than any human.

But it doesn’t:

Know which leads deserve your time and which are noise

Understand that this client needs a different approach than last month’s client

Recognize when a deal is slipping because the timing is wrong, not the price

See that your competitor just changed strategy and your positioning needs to shift

Prioritize what matters to your business versus what sounds impressive

You think: “I have more AI tools than ever. Why does everything still feel manual?”

Solution: The Scalable Judgment Stack

…a framework that embeds your proprietary business judgment into AI execution platforms, so the more the platform automates, the more your expertise compounds.

A system that:

Separates execution decisions (what AI handles) from judgment decisions (what you own)

Creates rules for when AI operates autonomously and when it escalates

Builds feedback loops so AI outputs improve based on your corrections

Makes your expertise the routing layer that determines which platform, model, or agent handles which task

Turns every AI interaction into a training signal for your operation

Execution Plan

1. Map Your Judgment Points

Before adding another platform, identify where human judgment actually creates value in your operation.

List every decision made in a typical client cycle from first contact to close

Mark each decision as “routine” (AI) or “judgment” (requires human expertise)

Focus on the judgment points where experience, relationships, or market intuition drive the outcome

If you can’t articulate why a decision requires you, it probably doesn’t.

2. Define Your Routing Rules

Build explicit criteria for what AI handles autonomously and what gets routed to a human.

Create a simple decision tree: if the task matches [criteria], AI executes; if not, it escalates

Set confidence thresholds: AI drafts on its own when confidence is high and flags the output for review when it isn’t

Route by stakes, not by complexity: a simple email to a $2M client still needs your eyes.
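To make the three routing rules concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `Task` fields, the routine-task list, the 0.80 confidence threshold, and the $1M stakes cutoff are placeholders you would replace with your own judgment map, and it assumes your AI tool exposes some kind of confidence score (many don’t, in which case you would substitute your own proxy).

```python
from dataclasses import dataclass

# Hypothetical thresholds and task list: replace with values from your own judgment map.
CONFIDENCE_THRESHOLD = 0.80     # below this, routine output is flagged for review
HIGH_STAKES_VALUE = 1_000_000   # client value (USD) at or above which a human always handles it
ROUTINE_TASKS = {"follow_up_email", "listing_description", "lead_sort"}


@dataclass
class Task:
    kind: str             # task type, e.g. "follow_up_email"
    client_value: float   # account or deal value in USD
    ai_confidence: float  # 0.0 to 1.0; assumes your tool exposes some confidence score


def route(task: Task) -> str:
    """Return 'human', 'ai_execute', or 'flag_for_review' for a task."""
    # Route by stakes first: a simple email to a high-value client still goes to you.
    if task.client_value >= HIGH_STAKES_VALUE:
        return "human"
    # Decision tree: anything not on the routine list escalates.
    if task.kind not in ROUTINE_TASKS:
        return "human"
    # Confidence threshold: routine work runs on its own only when confidence is high.
    if task.ai_confidence >= CONFIDENCE_THRESHOLD:
        return "ai_execute"
    return "flag_for_review"


if __name__ == "__main__":
    print(route(Task("follow_up_email", 2_000_000, 0.95)))  # human
    print(route(Task("follow_up_email", 40_000, 0.95)))     # ai_execute
    print(route(Task("follow_up_email", 40_000, 0.55)))     # flag_for_review
```

The stakes check runs before anything else on purpose: even a routine, high-confidence email escalates when the client is big enough.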

3. Build Correction Loops

Every time you override, edit, or redirect an AI output, that correction should feed back into the system.

Track what you change and why

Store corrections in a format your AI tools can reference

Create categories: tone corrections, factual corrections, strategic redirects, timing adjustments

Use corrections to update your routing rules quarterly

The operators who treat corrections as training data build AI systems that get smarter; everyone else just edits forever.
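One way to store corrections “in a format your AI tools can reference” is a plain JSON Lines log, one correction per line, tagged with the four categories above. The sketch below is an assumption about structure, not a required format: the field names and the `quarterly_summary` helper are hypothetical, and the summary simply counts corrections per task type and category so you can see which routing rules to revisit each quarter.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass

# The four correction categories listed above.
CATEGORIES = {"tone", "factual", "strategic_redirect", "timing"}


@dataclass
class Correction:
    task_kind: str   # which kind of task the AI output belonged to
    category: str    # one of CATEGORIES
    original: str    # what the AI produced
    revised: str     # what you changed it to
    reason: str      # why you changed it: the part your tools can reference later

    def __post_init__(self) -> None:
        # Reject uncategorized corrections so the log stays usable for reviews.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category!r}")


def to_jsonl(corrections: list[Correction]) -> str:
    """One JSON object per line: easy to load into a knowledge base or paste into a prompt."""
    return "\n".join(json.dumps(asdict(c)) for c in corrections)


def quarterly_summary(corrections: list[Correction]) -> Counter:
    """Count corrections by (task kind, category) to show which routing rules need updating."""
    return Counter((c.task_kind, c.category) for c in corrections)
```

If one task type keeps collecting the same category of correction, that is a candidate for either a tighter rule or a move from “routine” back to “judgment.”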

4. Stack Your Platforms by Function

Stop using every platform for everything. Assign each tool a specific role based on what it does best.

Match platform strengths to your judgment map: high-judgment tasks go to tools with better human-in-the-loop features

Eliminate overlap: two tools doing the same job means twice the review time and half the clarity

Evaluate platforms by how well they accept and apply your corrections

A smaller, well-routed stack outperforms a bloated one every time.
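A quick way to spot overlap is to write your stack down as a map from each tool to the jobs you actually use it for, then look for jobs claimed by more than one tool. This is a minimal sketch; the tool names and job labels are hypothetical placeholders.

```python
from collections import defaultdict


def find_overlaps(stack: dict[str, set[str]]) -> dict[str, list[str]]:
    """Given {tool: jobs it is used for}, return {job: tools} wherever 2+ tools share a job."""
    owners: dict[str, list[str]] = defaultdict(list)
    for tool, jobs in stack.items():
        for job in jobs:
            owners[job].append(tool)
    return {job: sorted(tools) for job, tools in owners.items() if len(tools) > 1}


if __name__ == "__main__":
    # Hypothetical tool names and roles.
    stack = {
        "tool_a": {"drafting", "research"},
        "tool_b": {"drafting"},
        "tool_c": {"lead_scoring"},
    }
    print(find_overlaps(stack))  # {'drafting': ['tool_a', 'tool_b']}
```

An empty result means every job has a single owner, which is the state this step aims for.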

5. Measure Judgment ROI

Track whether your judgment layer is actually making AI more valuable over time.

Compare AI outputs before and after your corrections are applied

Track time-to-close, client satisfaction, and deal quality

Measure your “escalation rate”: how often AI needs human intervention

Flag when AI outputs start drifting from your standards

The goal is not zero human involvement; it’s human involvement only where it multiplies value.
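Escalation rate is simple arithmetic: tasks handed to a human divided by total tasks in a period. The sketch below computes it per month and raises a drift flag when the rate climbs noticeably above a baseline. Treating a rising escalation rate as a drift signal, the 10-point tolerance, and the month labels are all assumptions for illustration; you might track your correction rate the same way.

```python
def escalation_rate(outcomes: list[str]) -> float:
    """Share of tasks handed to a human; each outcome is 'ai' or 'human'."""
    if not outcomes:
        return 0.0
    return outcomes.count("human") / len(outcomes)


def drift_alert(baseline: float, current: float, tolerance: float = 0.10) -> bool:
    """True when the escalation rate has risen more than `tolerance` above baseline."""
    return current - baseline > tolerance


if __name__ == "__main__":
    # Hypothetical monthly logs of who handled each task.
    months = {
        "month_1": ["ai", "ai", "human", "ai"],
        "month_2": ["ai", "human", "human", "ai"],
    }
    rates = {m: escalation_rate(o) for m, o in months.items()}
    print(rates)  # {'month_1': 0.25, 'month_2': 0.5}
    print(drift_alert(rates["month_1"], rates["month_2"]))  # True
```

A rate that falls toward some floor, not zero, is the healthy pattern: the remaining escalations should be the judgment calls you mapped in step 1.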

Problem Solved: From Hollow AI Answers to Judgment-Driven AI Outputs

The platforms will keep consolidating. The cost of execution will keep dropping toward zero.

But the cost of bad judgment stays the same.

The operators who build a judgment stack own something no platform can replicate.

✓ AI coaching sessions, tool updates, news & more

✓ Fresh ideas, new workflows, GPTs, Skills, and automations

✓ Recordings of each session, with lifetime membership access

AI is evolving weekly, and the fluency required to keep up isn’t holding still.

The longer you wait, the steeper the on-ramp gets.

Jargon Buster of the Week

Model Routing

Automatically directing a task to the AI model or tool best suited to handle it, instead of sending everything to one default system.

Why It Matters

Not every AI task needs the same engine.

Model routing matches each task to an appropriate model, using lightweight models for simple lookups and heavyweight models for complex reasoning, which can cut AI costs by 50% or more while maintaining quality.

In Practice

A brokerage routes lead qualification emails through a fast, low-cost model while sending complex market analysis requests to a more capable (and expensive) reasoning model.

The routing decision is invisible to the end user, but it controls both the quality and cost of every AI interaction in the operation.
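The brokerage example can be sketched as a tiny router plus a cost comparison. The model labels, task types, and per-1K-token prices below are made-up placeholders, not real vendor pricing; the point is only to show how a routing rule changes the blended cost of a task mix compared with sending everything to the expensive model.

```python
# Hypothetical model tiers and per-1K-token prices: illustrative only, not real pricing.
MODELS = {
    "light": {"name": "fast-low-cost-model", "usd_per_1k_tokens": 0.0005},
    "heavy": {"name": "reasoning-model", "usd_per_1k_tokens": 0.01},
}

# Task types assumed simple enough for the light tier.
LIGHT_TASKS = {"lead_qualification_email", "simple_lookup"}


def pick_tier(task_type: str) -> str:
    """Route known-simple task types to the light tier; everything else to the heavy tier."""
    return "light" if task_type in LIGHT_TASKS else "heavy"


def total_cost(tasks: list[tuple[str, int]], routed: bool = True) -> float:
    """Sum cost over (task_type, tokens) pairs, with routing on or everything on heavy."""
    total = 0.0
    for task_type, tokens in tasks:
        tier = pick_tier(task_type) if routed else "heavy"
        total += tokens / 1000 * MODELS[tier]["usd_per_1k_tokens"]
    return total


if __name__ == "__main__":
    # Hypothetical mix: many short lead emails, a few long market analyses.
    tasks = [("lead_qualification_email", 1000)] * 90 + [("market_analysis", 4000)] * 10
    print(f"routed:   ${total_cost(tasks):.3f}")
    print(f"unrouted: ${total_cost(tasks, routed=False):.3f}")
```

Actual savings depend entirely on your own task mix and real model prices; the sketch only shows where the difference comes from.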